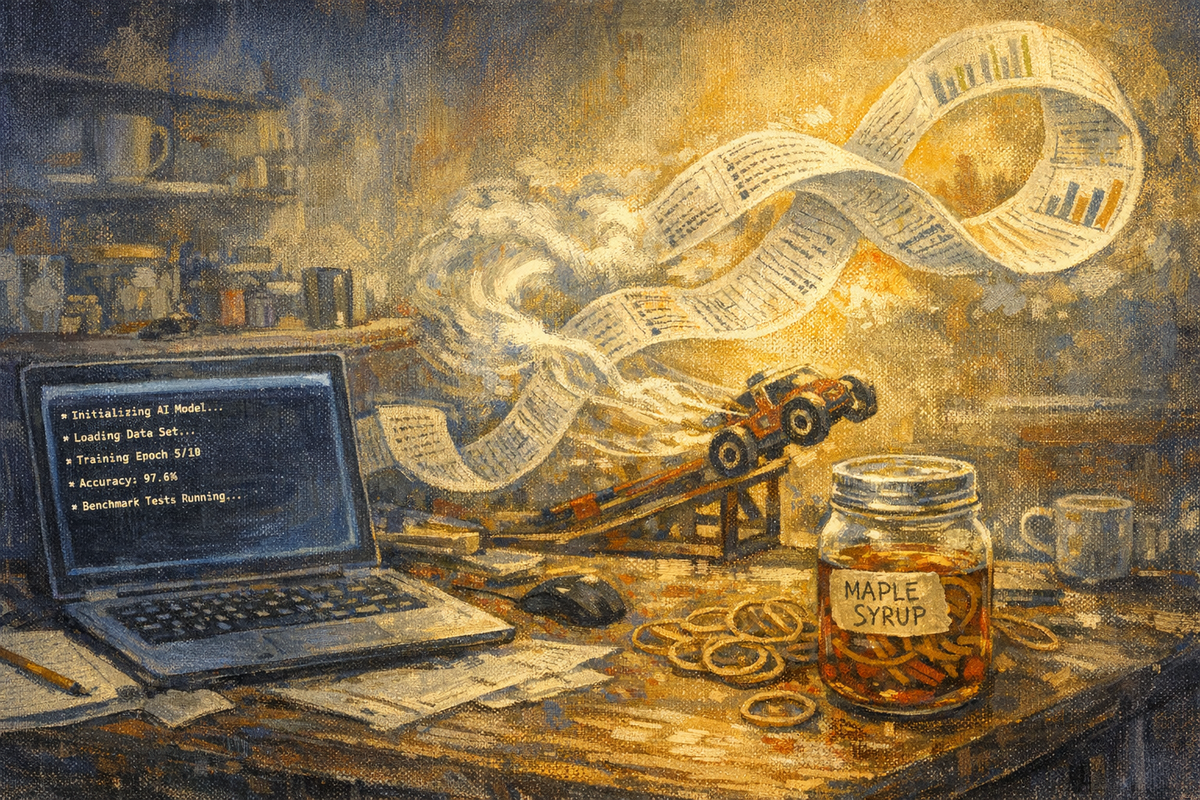

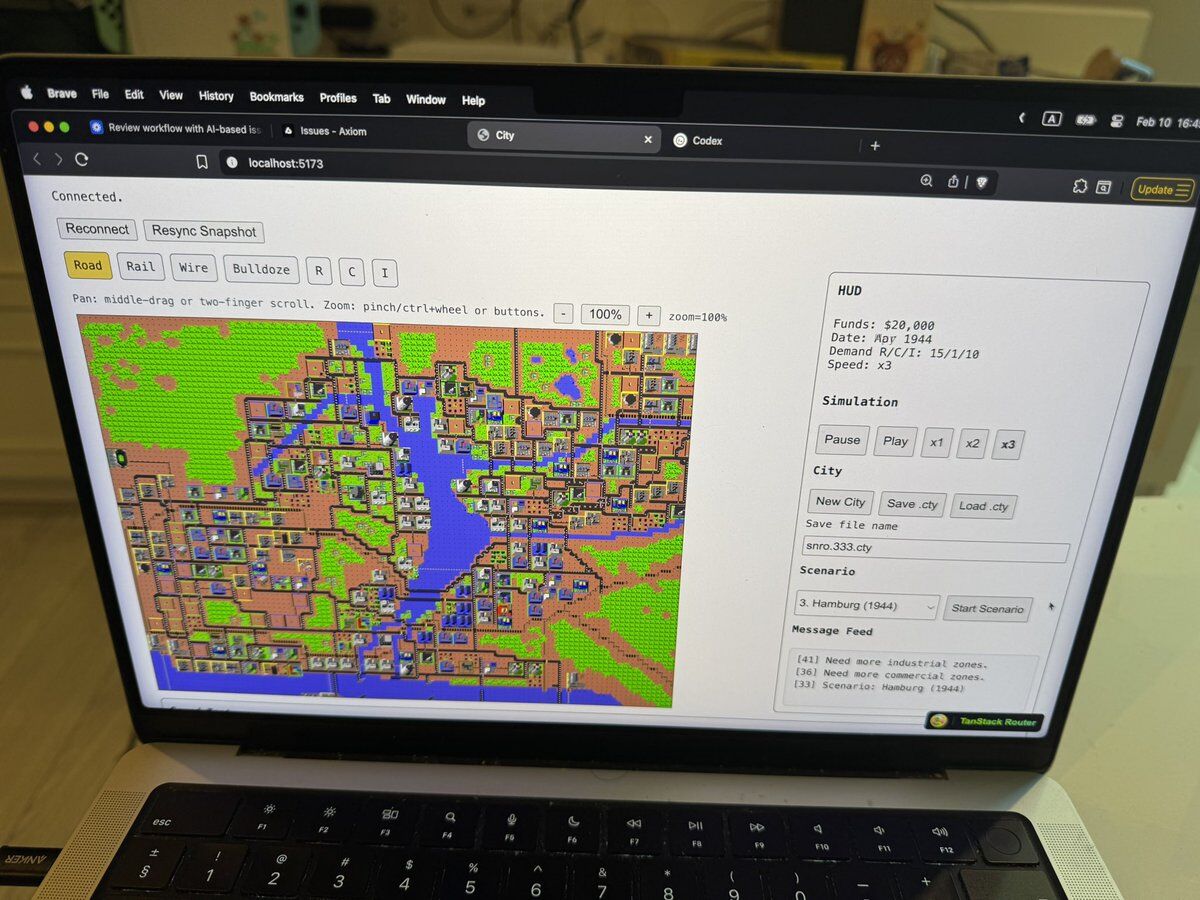

Karpathy’s autoresearch repo ran 83 ML experiments overnight on a single GPU, kept 15 improvements, and discarded the rest, all without a human touching the code. The human writes a Markdown file. The agent edits the training code, trains for exactly 5 minutes per run, scores the result against a fixed metric, and loops. Three files, ~100 experiments, one night. The pattern from every post in this story crystallizes here: design the arena, let AI iterate.
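To make the loop concrete, here is a minimal Python sketch of the keep-or-discard pattern described above. It is not the repo's actual code: `run_arena`, `toy_propose`, and `toy_evaluate` are hypothetical names, the "training code" is reduced to a single float, the fixed 5-minute training run is replaced by an instant toy scoring function, and a lower score is assumed to be better.

```python
import random
from typing import Callable, List, Tuple, TypeVar

C = TypeVar("C")  # whatever represents one version of the training code


def run_arena(
    baseline: C,
    propose: Callable[[C], C],
    evaluate: Callable[[C], float],
    n_experiments: int,
) -> Tuple[C, float, List[Tuple[int, float]]]:
    """Greedy keep-or-discard loop. Assumption: a lower score is better."""
    best, best_score = baseline, evaluate(baseline)
    kept: List[Tuple[int, float]] = []  # (experiment index, score) of each kept change
    for i in range(n_experiments):
        candidate = propose(best)       # the agent edits the current best version
        score = evaluate(candidate)     # stand-in for a fixed-budget training run
        if score < best_score:          # keep only strict improvements
            best, best_score = candidate, score
            kept.append((i, score))
        # otherwise the candidate is discarded and the loop continues from `best`
    return best, best_score, kept


# Toy stand-ins (hypothetical): tune one number toward a target of 3.0.
rng = random.Random(0)


def toy_propose(x: float) -> float:
    # Random perturbation in place of an agent's code edit.
    return x + rng.gauss(0.0, 0.5)


def toy_evaluate(x: float) -> float:
    # Fixed metric: squared distance from the target.
    return (x - 3.0) ** 2


best, best_score, kept = run_arena(0.0, toy_propose, toy_evaluate, n_experiments=83)
print(f"kept {len(kept)} of 83 experiments, final score {best_score:.4f}")
```

The point of the sketch is the division of labor: the human fixes `evaluate` and the experiment budget (the arena), while `propose` is the only part the agent controls.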

Mar 08, 2026 · 7 min