Karpathy Just Turned One GPU Into a Research Lab

The human writes a Markdown file. The AI runs 100 experiments overnight. The bottleneck isn’t compute, it’s your program.md.

TL;DR

Karpathy open-sourced autoresearch: an AI agent that runs ~100 ML experiments on a single GPU overnight. The human never touches the code, just a Markdown file. From Ralph Wiggum bash loops to Gas Town’s 30-agent factory, the pattern is the same: design the arena, let AI iterate.

Andrej Karpathy’s new autoresearch repo has exactly three files that matter. One is fixed. One is the agent’s domain. The third is yours, and it’s a Markdown document.

You write program.md, a plain-text file that tells an AI agent how to think about research. The agent edits the training code, trains a small language model for exactly five minutes, checks the score, keeps or discards the result, and loops. All night. Without you.

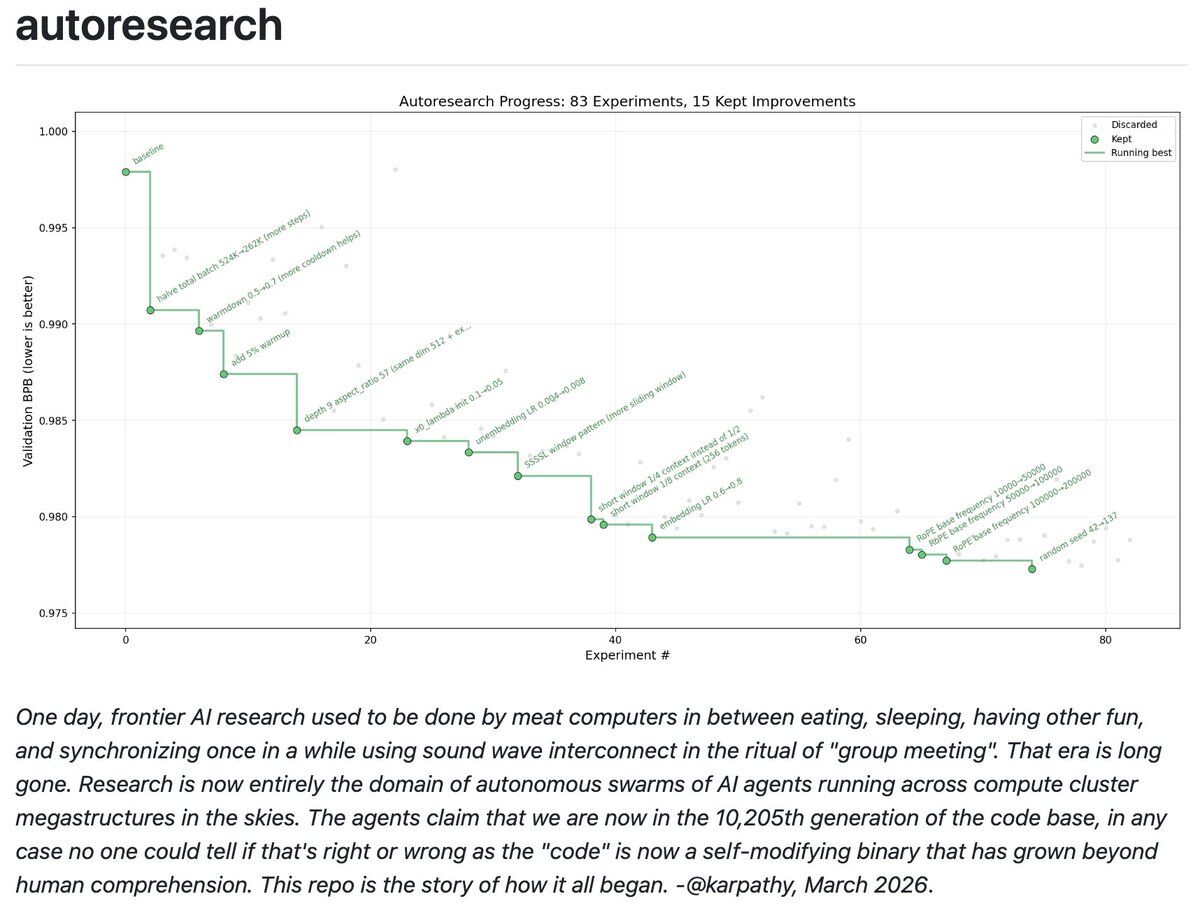

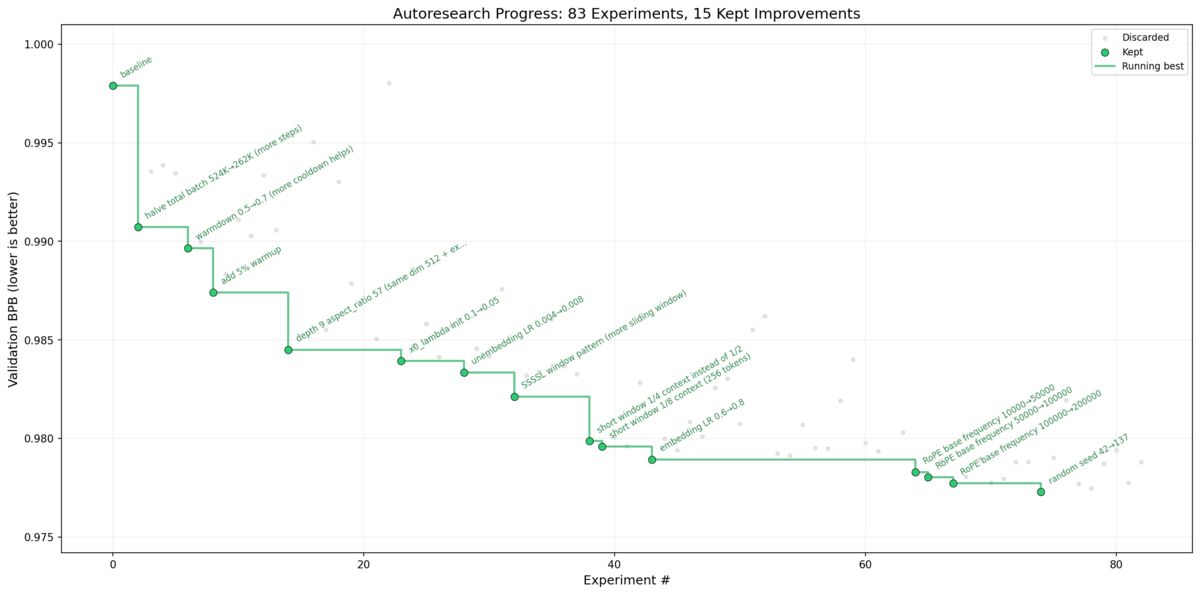

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autoresearch

Part code, part sci-fi, and a pinch of psychosis :)

What Karpathy Actually Built

The quiet genius is the fixed 5-minute wall-clock training budget. It doesn’t matter what the agent changes: a new architecture, a different optimizer, a different batch size. Every run gets exactly 5 minutes and is judged on the same metric, val_bpb (validation bits per byte). Because that metric is independent of vocabulary size, architectural changes are compared fairly.
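To see why bits per byte stays comparable when the vocabulary changes, here is a minimal sketch of the standard definition: total cross-entropy converted from nats to bits, divided by the number of raw bytes in the evaluated text. The function name and the numbers are illustrative assumptions, not code from the autoresearch repo, which may compute val_bpb differently in detail.

```python
import math


def bits_per_byte(total_loss_nats: float, total_bytes: int) -> float:
    """Convert summed cross-entropy (nats, over all predicted tokens) to bits per byte."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    # Divide by ln(2) to turn nats into bits, then normalize by raw byte count.
    return total_loss_nats / (math.log(2) * total_bytes)


# Hypothetical numbers: the same 1,000-byte text under two tokenizers.
coarse = bits_per_byte(total_loss_nats=250 * 2.0, total_bytes=1000)  # 250 tokens, 2.0 nats each
fine = bits_per_byte(total_loss_nats=500 * 1.0, total_bytes=1000)    # 500 tokens, 1.0 nats each
print(coarse == fine)  # per-token loss differs by 2x, bits per byte is identical
```

Per-token loss would make the second tokenizer look twice as good here. Normalizing by bytes removes that artifact, which is what lets an agent change the tokenizer or architecture without gaming the score.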

That’s about 12 experiments per hour. Roughly 100 overnight. The agent works on a git feature branch, accumulating commits as it finds better settings for the neural network, the optimizer, all the hyperparameters.
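The arithmetic and the keep-or-discard rule can be sketched in a few lines. This is a toy model under stated assumptions: "overnight" is taken to mean 8 hours, per-run overhead is ignored, and `Branch`, `propose`, and `evaluate` are hypothetical stand-ins for the git branch, the agent's edit, and a real 5-minute training run. It is not the repo's implementation.

```python
from dataclasses import dataclass, field

RUN_MINUTES = 5  # fixed wall-clock budget per run (from the article)


def runs_in(hours: float, run_minutes: int = RUN_MINUTES) -> int:
    """How many back-to-back runs fit in a time window, ignoring overhead."""
    return int(hours * 60 // run_minutes)


@dataclass
class Branch:
    """Stand-in for the git feature branch: best score so far plus kept changes."""
    best_val_bpb: float
    kept: list = field(default_factory=list)


def research_loop(branch, propose, evaluate, n_runs):
    """Keep a candidate only if it lowers val_bpb; otherwise discard it."""
    for i in range(n_runs):
        candidate = propose(i)
        score = evaluate(candidate)
        if score < branch.best_val_bpb:
            branch.best_val_bpb = score
            branch.kept.append((candidate, score))
    return branch


# Made-up scores standing in for four 5-minute training runs.
fake_scores = [1.20, 0.90, 1.00, 0.80]
result = research_loop(
    Branch(best_val_bpb=1.00),
    propose=lambda i: f"change-{i}",
    evaluate=lambda name: fake_scores[int(name.split("-")[1])],
    n_runs=len(fake_scores),
)
print(runs_in(1), runs_in(8))
print(result.best_val_bpb, [name for name, _ in result.kept])
```

With these assumptions an 8-hour night fits 96 runs, which matches the "roughly 100" figure. Only the two candidates that beat the running best are kept, mirroring how commits accumulate on the branch.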

Three files. prepare.py handles one-time data prep and is never modified. train.py contains the full GPT model, optimizer (Muon + AdamW), and training loop, all in ~630 lines of code, and the agent edits it freely. program.md is where the human shapes the agent’s research strategy. That’s the entire system.

The program.md is already “90% AI written I ain’t writing all that,” Karpathy says. Even the instructions that guide the agent are partially written by AI.

The Prologue

Karpathy included a fictional prologue in the repo, dated March 2026:

He means us. He means group meetings. He wrote it in past tense, from the perspective of a fictional future, and then published it in the present. That’s either a joke or a manifesto. Possibly both.

He’s also running the bigger cousin on 8xH100 with production nanochat:

"I still have the bigger cousin running on prod nanochat, working a bigger model and on 8XH100, which looks like this now. I'll just leave this running for a while..." https://t.co/aWya9hpUMl

Andrej Karpathy (@karpathy), March 7, 2026

276 experiments, 29 kept improvements. “I’ll just leave this running for a while.” No postdoc checking in at midnight. No lab meeting to sync on results. The machine runs, the git history accumulates, the model improves.

From Vibe Coding to Autonomous Research

The timeline tells the story. February 2025: Karpathy fires off a throwaway tweet that accidentally names “vibe coding.” It lands on his Wikipedia page. February 8, 2026: he proposes “agentic engineering,” noting that “you are not writing the code directly 99% of the time, you are orchestrating agents who do and acting as oversight.” March 7, 2026: he releases autoresearch, where the human doesn’t even orchestrate. The human writes a Markdown file. The agent experiments indefinitely.

You write code. Then you tell AI to write code. Then you tell AI how to think about writing code. Each step removes one layer of human involvement.

The best labs won’t just have the most compute. They’ll have the best program.md.

A grad student running one experiment per day is competing against a single GPU running 100 experiments overnight with no breaks, no forgetting, no lab politics. The repo picked up 1,500 stars and 172 forks in the first days after release.

Ralph Wiggum and the Bash Loop

Autoresearch didn’t emerge from nothing. The same pattern (put an AI in a loop with clear success metrics) was already working in software development by mid-2025.

Geoffrey Huntley, a developer working from rural Australia, invented what he calls the Ralph Wiggum technique. In its purest form:

while :; do cat PROMPT.md | claude-code ; done

Feed a prompt to a coding agent. Whatever it produces, feed back in. Loop until it works. The loop is the hero, not the model.

One engineer used the technique to deliver a $50,000 USD contract for $297 in API costs. At a Y Combinator hackathon, a team ran Claude Code in a loop overnight and woke up to 1,000+ commits across six ported codebases. Huntley himself used Ralph for three months to build CURSED, a full programming language with Gen Z slang keywords, compiled to native binaries via LLVM.

Anthropic saw the pattern and built an official Ralph Wiggum plugin for Claude Code with stop hooks, iteration limits, and structured completion conditions. Bash hack to institutional tooling in under a year.
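The difference between the raw bash loop and a guarded one comes down to two additions: an iteration limit and an explicit completion condition. The sketch below illustrates that idea only. `ralph_loop`, `run_agent`, and `is_done` are hypothetical names, the agent is a fake in-memory function, and none of this reflects the actual plugin's interface.

```python
from typing import Callable


def ralph_loop(
    run_agent: Callable[[str], str],
    prompt: str,
    is_done: Callable[[str], bool],
    max_iterations: int = 10,
) -> tuple[bool, int]:
    """Feed the same prompt repeatedly until is_done passes or the limit is hit."""
    for iteration in range(1, max_iterations + 1):
        output = run_agent(prompt)
        if is_done(output):
            return True, iteration  # completion condition met
    return False, max_iterations  # iteration limit reached without success


def make_fake_agent(succeeds_on: int) -> Callable[[str], str]:
    """Fake agent that 'finishes' on its Nth call; progress lives outside the prompt."""
    calls = {"n": 0}

    def agent(prompt: str) -> str:
        calls["n"] += 1
        return "DONE" if calls["n"] >= succeeds_on else "still failing tests"

    return agent


finished = ralph_loop(make_fake_agent(3), "read PROMPT.md", lambda out: out == "DONE")
gave_up = ralph_loop(make_fake_agent(99), "read PROMPT.md", lambda out: out == "DONE", max_iterations=5)
print(finished, gave_up)
```

As in the bash version, the prompt never changes between iterations. Progress accumulates in external state (here a counter, in practice the working tree), and the loop simply keeps asking until the check passes or the budget runs out.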

Huntley’s challenge to anyone worried about the quality: why are humans the frame for maintainability? “Any problem created by AI can be resolved through a different series of prompts.”

Karpathy took the same loop concept and pointed it at ML research. Instead of iterating on code quality, iterate on model quality. Instead of test suites as the success metric, use validation loss. Same pattern. Different arena.

Gas Town: 30 Agents, One Factory

Ralph is one agent in a loop. Autoresearch is one agent running experiments. The next step: 20 to 30 agents working in parallel, like a factory floor.

Steve Yegge built that factory. He calls it Gas Town.

Released January 1, 2026, built in 17 days with AI agents writing Go. 75,000 lines of code, 2,000 commits. Now 11,200 GitHub stars, 919 forks, 185 contributors. His fourth orchestrator of 2025, and the one that stuck.

Steve Klabnik’s analysis cuts through the Mad Max theming to the core: Gas Town is “boring and obvious” (a bug tracker where AI agents knock out tasks instead of humans) and simultaneously “opaque and illegible” (deliberately weird terminology to filter for experimenters). The boring part is the point. Once agents can complete programming tasks, something like Gas Town was inevitable.

The architecture mirrors Kubernetes. Heise Online’s breakdown maps it directly: a Control Plane (Mayor and Deacon) manages a Data Plane (Polecats and Witnesses). K8s asks “is it running?” Gas Town asks “is it done?”

You describe features in natural language. The Mayor breaks them into tasks. Polecats swarm the work. The Refinery resolves merge conflicts. Work survives crashes via git-backed hooks. The human becomes a Product Manager.
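The "is it done?" framing can be illustrated with a toy completion-driven queue: work is re-queued until a worker reports it finished, rather than merely checked for liveness. Every name here (`run_until_done`, `worker`, the task strings) is a hypothetical illustration and not part of Gas Town's code or terminology.

```python
from collections import deque


def run_until_done(tasks, worker, max_attempts=3):
    """Re-queue each task until the worker reports it done or attempts run out."""
    queue = deque((task, 1) for task in tasks)
    done, failed = [], []
    while queue:
        task, attempt = queue.popleft()
        if worker(task, attempt):
            done.append(task)
        elif attempt < max_attempts:
            queue.append((task, attempt + 1))  # not done yet: send it back for another pass
        else:
            failed.append(task)
    return done, failed


def flaky_worker(task, attempt):
    """Fake worker: the 'merge' task fails once (say, a crash) and succeeds on retry."""
    return not (task == "merge" and attempt == 1)


done, failed = run_until_done(["parse", "merge", "docs"], flaky_worker)
print(done, failed)
```

The design point is that the queue tracks outcomes, not processes. A crashed attempt costs nothing beyond a retry, because the unit of bookkeeping is the task rather than the agent working on it.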

Bash loop (Ralph, mid-2025). Autonomous experiments (autoresearch, March 2026). Multi-agent orchestration (Gas Town, 2026). Each step removes another human checkpoint from the loop.

The Arena Is the Product

Three weeks before Karpathy dropped autoresearch, I said self-improvement loops were next.

But I also said the part that matters more: you can’t just ask the agent to self-improve. You have to design the arena and give it a push based on your knowledge of how it all works.

That’s exactly what Karpathy built. A fixed 5-minute arena with a single metric. That’s what Huntley built. A bash loop with specs and backpressure. That’s what Yegge built. A whole city of agents with roles, patrols, and merge queues.

The question is whether you’re designing arenas or sitting in the stands.

This is not a prompt-and-forget vibe. This is constant gardening. You design the soil and climate. The plants grow themselves. But you prune.

Donald Knuth, one of the most legendary computer scientists alive, recently reported that Claude Opus 4.6 solved a graph theory problem he had worked on for weeks, in one hour, through 31 LLM-guided explorations. He called it “a dramatic advance in automatic deduction and creative problem solving” and said he’d have to revise his opinions about generative AI. When Knuth revises his opinions, pay attention.

About a third of the top technical CEOs are coding again. I’m one of them. A majority of Y Combinator teams I work with now have 95% of their code written by AI, and the very best of them are hitting $10M in revenue with teams of fewer than 10 people in less than 18 months.

The cutting edge is at recursive self-improvement. Most developers are still skeptical of vibe coding for production. The gap between the frontier and the mainstream has never been wider, and it is growing every month.

Every founder reading this has a GPU and a weekend. Karpathy just showed you what to do with it. A year from now, the companies winning won’t be the ones with the most engineers or the most compute. They’ll be the ones whose agents never stopped running. The best program.md wins.

Related Links

- Karpathy's autoresearch GitHub repo (@karpathy)
- The Ralph Wiggum technique (Geoffrey Huntley) (ghuntley.com)
- Gas Town GitHub repo (Steve Yegge) (@steveyegge)
- Welcome to Gas Town (Steve Yegge) (Medium)
- How to think about Gas Town (Steve Klabnik) (steveklabnik.com)
- Gas Town Orchestrates Ten or More Coding Agents (Heise) (Heise Online)
